This worked perfectly, thanks a lot! I’ll update the main post.

I take my shitposts very seriously.

I’ll try it as soon as I get home.

It makes sense, since Wine uses SDL2 to emulate XInput.

(edit) I’m cautiously optimistic. I’ve just tried this on my work PC (without SISR):

SDL_GAMECONTROLLER_IGNORE_DEVICES='0x28de/0x1304' wine control joy.cpl

…and XInput doesn’t detect the SC. It should still receive inputs from the emulated device, since that uses a different VID/PID.
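For anyone who wants to script this, here is a minimal Python sketch of the same launch. The helper names are made up for illustration; only the variable name, the 0x28de/0x1304 pair, and the wine invocation come from the command above. As far as I know, SDL2 accepts a comma-separated list of VID/PID pairs in this variable, so the helper joins multiple devices with commas.

```python
import os
import subprocess


def ignore_devices_value(devices):
    """Format (vendor_id, product_id) pairs as a comma-separated 0xVID/0xPID list."""
    return ",".join(f"0x{vid:04x}/0x{pid:04x}" for vid, pid in devices)


def build_env(devices, base_env=None):
    """Return a copy of the environment with the SDL ignore list set."""
    env = dict(os.environ if base_env is None else base_env)
    env["SDL_GAMECONTROLLER_IGNORE_DEVICES"] = ignore_devices_value(devices)
    return env


def run_with_ignored(command, devices):
    """Run a command (hypothetical helper) with the given devices hidden from SDL."""
    return subprocess.run(command, env=build_env(devices), check=False)
```

Calling `run_with_ignored(["wine", "control", "joy.cpl"], [(0x28DE, 0x1304)])` should behave like the one-liner above.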

Are there any good indie games on the world’s largest video game store? I dunno, are there any leafy trees in the Amazon?

From what I’ve been playing: Stardew Valley, Factorio, Vampire Survivors, Derail Valley, A Hat In Time, Project Wingman, Frostpunk 1 and 2, Portal 2 (technically self-published), Signalis. Voices Of The Void will eventually have a Steam release. All of those games work well on Linux.

Stakeholders. Journalists. The market. The ignorant public. They’re constructing a narrative to shield themselves and minimize the hit to their reputation when they stop offering lifetime license plans. The announcement won’t look nearly as damning if it contains a reference to the falling number of new lifetime customers, even if it omits the context of why that number has been falling.

From a purely profit-oriented perspective, no. They’re setting up a pretext to eliminate the lifetime license plan due to a lack of interest. No sane person would pay that kind of lump sum for the service (and the insane ones will bring in triple the revenue), so they’ll claim that there is no market for it. After that, they’re free to crank up the periodic subscription prices.

Never attribute to stupidity that which is adequately explained by profiteering opportunism.

Yes, it’s recognised as a controller both in non-Steam games and in other applications like KDE Settings. It works just like any other controller with the usual, quasi-standard inputs (analog sticks, face buttons, etc). Steam support regarding non-Steam games:

Everything should work as intended if you have purchased and launched your game directly through Steam, but in many cases you will also be able to use the Controller with non-Steam games that run independently.

I’ve heard the argument that it is recognized as a KBM if you’re not on Steam.

If Steam isn’t running and there are no other games that capture the controller input, the SC enters “lizard mode” where it emulates mouse and certain keyboard inputs. The right touchpad becomes a mouse, the left touchpad becomes a scroll wheel, R2 is left click, L2 is right click, A is Enter, B is Escape; wev displays the correct input events. Lizard mode is disabled when you launch a game.

(edit) It sounds like this only works on Linux. Windows needs a separate utility to use the SC with non-Steam games.

(edit 2) This is what KDE reports:

It can detect the back buttons (Paddle 1-4) and the quick access menu (Miscellaneous). hid-recorder also shows that all other inputs are available through the /dev/hidraw* device. I wouldn’t be surprised at all if someone released a standalone Steam Input emulator app within a few weeks.
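If you want to peek at those raw reports without hid-recorder, a rough Python sketch could look like this. The function names, the 64-byte read size, and whichever /dev/hidraw* path you pass in are all assumptions; you also need read permission on the node. It only hex-dumps the bytes and makes no attempt to decode the SC's report layout.

```python
import os


def hex_dump(report: bytes) -> str:
    """Render a raw report as space-separated hex bytes."""
    return report.hex(" ")


def read_reports(path, count=5, size=64):
    """Read `count` raw reports from a hidraw node (the path is up to the caller)."""
    fd = os.open(path, os.O_RDONLY)
    try:
        # Each read on a hidraw node returns one input report.
        return [os.read(fd, size) for _ in range(count)]
    finally:
        os.close(fd)
```

Printing `hex_dump(r)` for each report while pressing a back button should show which bytes change.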

That’s not true. If you don’t use Steam Input, you only lose the Steam-specific inputs, like the touchpads, gyro, grip sense, and back buttons. Otherwise it is pretty much equivalent to a modern Xbox controller:

I’ve played a lot of Project Wingman with it, and the TMR inputs are actually a massive improvement.

You grossly overestimate the number of people who are both willing and able to deploy, secure, manage, and maintain this kind of infrastructure. You may not find any value in offloading these responsibilities to a service provider operated by trained professionals, but your outright refusal to acknowledge that other people might is nothing short of callous.

Not having to configure a separate utility is part of the user-friendliness

edit: this is way funnier with the original title: Your containers are leaking (and how to plug the holes)

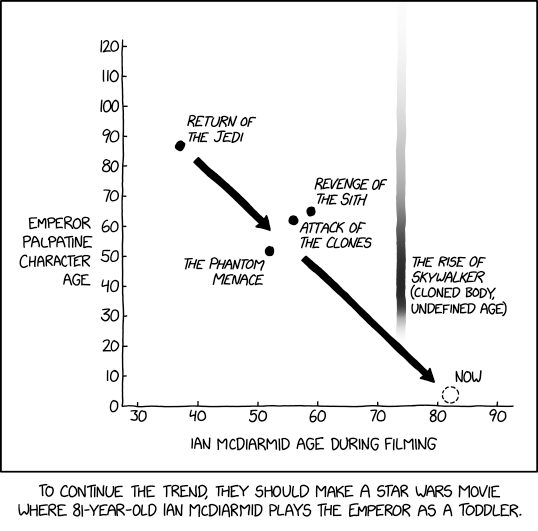

Is that before or after Emperor Palpatine’s cameo in Rugrats?

Open config.php and look for the entry named trusted_domains. Make sure it contains both the domain name and the local IP address:

    'trusted_domains' => array(
        0 => 'nextcloud.your.domain', // the public FQDN
        1 => '172.22.?.?', // the local IP address
        2 => '...', // other addresses, like if you're using a VPN
    ),

If the web app is opened using an address or DNS name that isn’t included in this list, the browser will connect, but the app will refuse to work.
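To make the behaviour concrete, here is a simplified Python illustration of that kind of check. This is not Nextcloud's actual code, just a sketch of the idea: the host the browser asked for is compared against the list, and anything not on it is refused. The function names are hypothetical, and 192.0.2.10 is a documentation-range placeholder, not the real local address.

```python
def host_without_port(host_header):
    """Strip a trailing :port from a Host header (IPv6 literals not handled)."""
    host, sep, port = host_header.rpartition(":")
    return host if sep and port.isdigit() else host_header


def is_trusted(host_header, trusted_domains):
    """Exact, case-insensitive match of the requested host against the list."""
    allowed = {domain.lower() for domain in trusted_domains}
    return host_without_port(host_header).lower() in allowed


# Placeholder values for illustration only.
TRUSTED = ["nextcloud.your.domain", "192.0.2.10"]
```

With this list, a request for `nextcloud.your.domain` passes, while the same server reached through a VPN address that isn't listed would be rejected even though the connection itself works.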

Never mind, I completely overlooked that the service is Opencloud, not Nextcloud. Nevertheless, you should investigate whether Opencloud has an equivalent config variable.

https://tailscale.com/docs/how-to/set-up-https-certificates#machine-names-in-the-public-ledger

Your machine names and tailnet domain name will be added to a list that is publicly accessible when a new certificate is issued to one of your machines. CT is meant to verify, through one or multiple third parties, that a certificate was issued to a particular DNS name. This isn’t unique to Tailscale – all other CAs do this, and modern browsers will refuse to connect to websites if they can’t verify the certificate through at least one CT ledger.

This doesn’t expose your systems any more than getting a DNS entry and a certificate from other sources. If you don’t want your tailnet and machine names out in the public, you’ll have to use self-signed certs and self-hosted HTTPS-capable servers or proxies.

Right at this moment, I’m rebuilding my homelab after a double HDD failure earlier this year.

The previous build had a RAID 5 array of three 1TB Seagate Barracudas that I picked out of the scrap pile at work. I knew what I was getting into and only kept replaceable files on it. When one of the drives started doing the death rattle, I decided to yank some harder-to-acquire files to my 3TB desktop HDD before trying to resilver the entire array. Guess which device was the next to fail. I could mount and read it, but every operation took 2-5 minutes. SMART showed a reallocation count in the thousands. That drive contained some important files that I couldn’t replace, which were backed up to the (now dead) server. Fortunately ddrescue managed to recover damn near everything and I only lost 80 kilobytes out of the entire disk. That was a very expensive lesson that I’ve learned very cheaply.

The new setup has a RAIDZ1 pool of 3x 4TB IronWolf disks (constrained by the available SATA sockets on the motherboard), plus a new SSD for the OS and 16GB RAM (upgraded from literally the first SSD I ever bought and 10GB of mismatched DDR3).

Mounting it was a bit of a dilemma. The previous array was simply mounted to the filesystem from fstab and bind-mounted to the containers. I definitely wanted the storage to be managed from Proxmox’s web UI and to be able to create VDs and LXC volumes on it. Some community members helped me choose ZFS over LVM-on-RAID5. Setting up the correct permissions wasn’t as much of a headache as last time. I’ve just managed to get a Samba+NFS+HTTP file server and Jellyfin running and talking to each other. Forgejo and Nextcloud will be next.

ZFS uses RAM intensively for caching, way more than traditional filesystems do. The recommended cache size is 2 GB plus 1 GB per terabyte of capacity. For my server, that would be three quarters of the RAM dedicated entirely to the filesystem.
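Here is that rule of thumb as a quick Python calculation, using the 3x 4TB RAIDZ1 pool and 16GB of RAM mentioned above. The function names are made up, and whether "capacity" means raw or usable space is an assumption on my part, so the sketch computes both. The results land on either side of the three-quarters figure.

```python
def recommended_cache_gb(capacity_tb, base_gb=2.0, gb_per_tb=1.0):
    """Rule of thumb from above: 2 GB plus 1 GB per terabyte of capacity."""
    return base_gb + gb_per_tb * capacity_tb


def share_of_ram(cache_gb, ram_gb):
    """Fraction of total RAM the recommended cache would occupy."""
    return cache_gb / ram_gb


DISKS, DISK_TB, RAM_GB = 3, 4, 16

raw_tb = DISKS * DISK_TB           # 12 TB across all three disks
usable_tb = (DISKS - 1) * DISK_TB  # 8 TB, since RAIDZ1 spends one disk's worth on parity

raw_share = share_of_ram(recommended_cache_gb(raw_tb), RAM_GB)        # 14 GB of 16 GB
usable_share = share_of_ram(recommended_cache_gb(usable_tb), RAM_GB)  # 10 GB of 16 GB
```

Counting raw capacity gives 87.5% of the RAM; counting usable capacity gives 62.5%.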

Read my comment again, it has the answer. Most VPN services do not provide end-to-end tunnelling. If the exit node is located outside Russia, then what enters the Russian internet will be simple HTTPS traffic.

Been running it from Russia where stock WireGuard stopped working mid-2025.

Sounds like the issue is ISPs within Russia blocking outgoing WireGuard traffic from customers.

If the traffic exits the tunnel without hitting a Russian ISP (e.g. a Mullvad exit node in Sweden that routes the unencrypted traffic to the destination), you won’t be affected. If the exit node is behind a Russian ISP, it might get filtered by DPI depending on which direction is subject to the filter.

It’s problematic, but possible: https://jamesguthrie.ch/blog/multi-tailnet-unlocking-access-to-multiple-tailscale-networks/

If the other person has a Tailscale account, it sounds like the most expedient method is to simply invite them to the tailnet as a non-admin user with strict access control.

You could share a node with an outside user, but I don’t know how much the quarantine would affect its functionality. You could also use Funnel to expose the node to the internet (essentially like a reverse proxy), but there are obvious, serious security considerations with that approach.

They’ve even put programmer socks behind subscriptions, world is a fuck